Brain-computer interface (BCI) technology and related threats

Do you believe in the right to mental privacy? We’re guessing you do. What if we told you that this right is at risk of being violated? Emerging technology could make private thoughts a thing of the past. The threat comes from newly emerging brain-computer interfaces (BCIs).

What is a BCI and how does it work?

Brain-computer interfaces (BCIs) serve as direct links between the highly complex human brain and an external device. Their primary uses are research and mapping of the brain, and assisting with or repairing human sensory and cognitive disabilities.

The threat arises because brain-computer interfaces are now commercially available and are being used on a large scale (for example, the B-Alert headset by ABM and the EPOC headset by Emotiv). As such, they could potentially be used to mine our minds for data, leaking information that is supposed to be private.

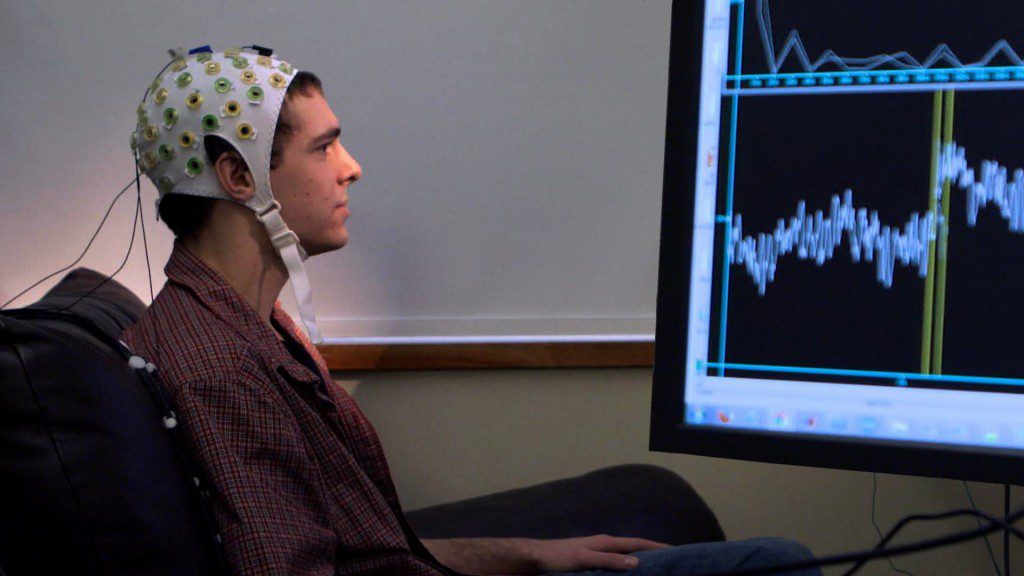

Electroencephalography (EEG) monitors and records the electrical activity of the brain. Because it is easy to use and non-invasive, EEG is a popular source of input signals for BCIs. The technology is also being used to improve user experience in gaming and entertainment.

The threat

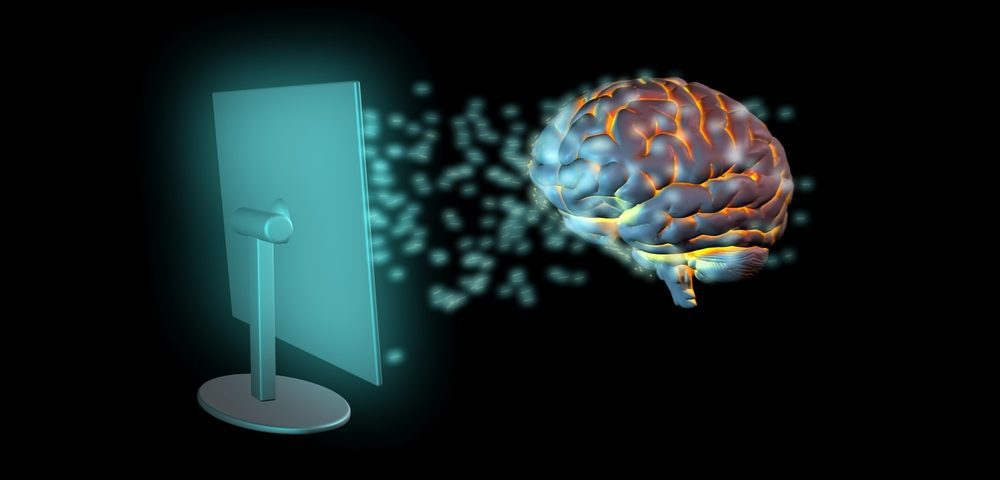

Given the ever-increasing popularity of this tech, it’s important to be wary of the security risks associated with it. EEG signals could be interpreted to extract somebody’s private data. A user may, while wearing a BCI device, enter a PIN, a password or other private information on a keyboard or another device. Certain applications can interpret the signals recorded during those keystrokes to reconstruct the private data. Further, studies have been conducted in which EEG signals were used to infer which object the user is thinking about or which task he or she is performing.

According to studies by the University of Alabama, hacking a BCI device could sharply increase the chances of guessing the PIN entered by the user (from 1 in 10,000 to almost 1 in 20). Emotiv, the developer of the EPOC headset, has denied these claims, but according to Alejandro Hernández, a security researcher with IOActive, this kind of decoding is 100% achievable. It’s just a matter of when.
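To put those odds in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch under our own assumptions: a four-digit PIN (which is what 1 in 10,000 implies), a hypothetical limit of three attempts before a lockout, and guesses treated as independent for simplicity. None of these details come from the study itself.

```python
from fractions import Fraction

# Assumption: a 4-digit PIN, which gives the 1-in-10,000 baseline quoted above.
PIN_DIGITS = 4
pin_space = 10 ** PIN_DIGITS

blind_guess = Fraction(1, pin_space)  # guessing with no side information
assisted_guess = Fraction(1, 20)      # the "almost 1 in 20" figure quoted above

# How many times more likely a single guess is to succeed.
improvement = assisted_guess / blind_guess


def chance_within(attempts: int, per_guess: Fraction) -> float:
    """Probability that at least one of `attempts` guesses succeeds.

    Simplification: every guess is treated as independent with the same odds.
    """
    return float(1 - (1 - per_guess) ** attempts)


if __name__ == "__main__":
    # Assumption: a hypothetical lockout after three wrong attempts.
    tries = 3
    print(f"Single-guess improvement: {improvement}x")
    print(f"Blind, {tries} tries:    {chance_within(tries, blind_guess):.4%}")
    print(f"Assisted, {tries} tries: {chance_within(tries, assisted_guess):.4%}")
```

Under these assumptions, a single guess becomes 500 times more likely to succeed, and an attacker with three tries goes from a negligible chance to roughly one in seven.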

Misuse is not limited to malicious hackers or destructive individuals. BCIs could also be exploited by governments or by the consumer products industry. With electrodes attached to your head, it becomes easier to capture your emotional response to an advertisement or a logo that appears on screen. Capturing such responses leaves plenty of room to build a profile of an individual. According to Bonaci and Chizeck, the most serious threat associated with BCI technology is advertising that infringes on user privacy.

Conclusion

Sure, BCI has plenty of benefits that likely outweigh its threats right now. But there’s no denying that in the near future, there’s going to be a critical need to protect the privacy of people using BCIs. Unfortunately, not much seems to be happening on that front. The BCI Anonymizer, a prototype BCI device, claims to filter out all private information. How it manages to do that, we’re not sure. It remains at the proposal stage. It is the need of the hour that such technology is brought within the ambit of strict regulations.