Google has been one of the pioneers of self-driving vehicles, and several automakers have followed its lead. The concept behind the cars is certainly an intriguing one, especially when you consider these vehicles could potentially keep people safer by preventing more accidents than humans could, all while driving safely under their own power.

However, some people have raised questions about possible ethical dilemmas associated with these high-tech vehicles. They often bring up the bystander effect, a socio-cultural phenomenon well known in psychology. The bystander effect describes how people are less likely to assist others in need when many other individuals are present. What if self-driving cars become so adept at handling accidents that people no longer feel they need to step in and help injured passengers?

The Bystander Effect and Accidents

If you’re driving a car or riding a bike and encounter an accident, do you feel morally responsible for making sure the people involved are okay? Many people say they do. Furthermore, if you’re trained in CPR, first aid or other medical treatments, you may be under a professional obligation to stop and use whatever skills are required, even if you’re off duty.

But consider the realistic possibility that self-driving vehicles could be programmed to automatically summon emergency personnel in the unlikely event of an accident. If that happens regularly because self-driving cars have become commonplace, passersby may simply assume help is on the way and not even bother pausing to see if assistance is needed. That’s the bystander effect in action.

Keep in mind that it may not be readily apparent whether a car is a self-driving or traditional model. If people already feel hesitant about pitching in at an accident scene, they may just assume their help is not required and stroll confidently on their way without even considering whether the car has the ability to help its passengers.

Saving the Majority While Sacrificing Passengers?

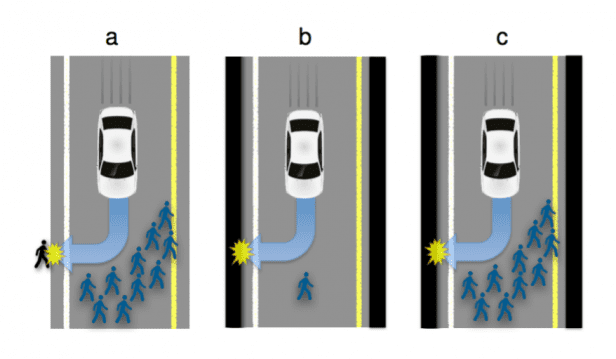

Another theory relates to self-driving cars and bystanders. Consider if a self-driving car swerves to avoid hitting a group of eight schoolchildren who weren’t paying attention before crossing the street, but the movement of the car is so severe it kills the passenger sitting in the vehicle. If the vehicle saved eight children and killed one adult, would you say it was programmed to make the right decision?

A psychologist named Jean-François Bonnefon, along with a team of researchers, surveyed around 900 study participants about collision scenarios. When asked about driverless cars, about 75 percent of the respondents thought it was preferable to sacrifice a vehicle’s passenger for the sake of saving only one pedestrian.

The ethical dilemma above is just one of many facing self-driving car manufacturers today. The trolley problem and the tunnel problem are two more examples where coders must choose how to program their cars’ reactions. Given the current number of vehicular fatalities, waiting for self-driving cars to be 99% safe disregards the fact that many of these accidents could have been prevented.

Figuring Out Who’s Responsible

As it stands, people argue that many individuals or companies could be liable in the event of an accident: the driver, the manufacturer of a particular part, the developer of the car’s software package or the automaker. In a pioneering move, Volvo has set an example by being one of the first companies to take full responsibility for accidents involving driverless cars, as long as the crashes are related to design flaws.

Some analysts believe Volvo is doing so to speed up the regulatory process that must take place before these cars will be widely available. People seated in driverless cars made by Volvo may also feel they’re doing the morally correct thing by picking those cars, because the company has agreed to be accountable if certain problems occur.

It remains to be seen whether the ethical dilemmas explored here will become realities once driverless cars start populating the roads in greater numbers. However, it seems inevitable that, if these vehicles become as commonplace as engineers suggest, we could find ourselves in the midst of an undeniable technological innovation.